Startup CTO's Guide to Preventing Downtime

Practical tips for engineering teams to ensure service reliability and trust.

Founder & Developer @ Mergent

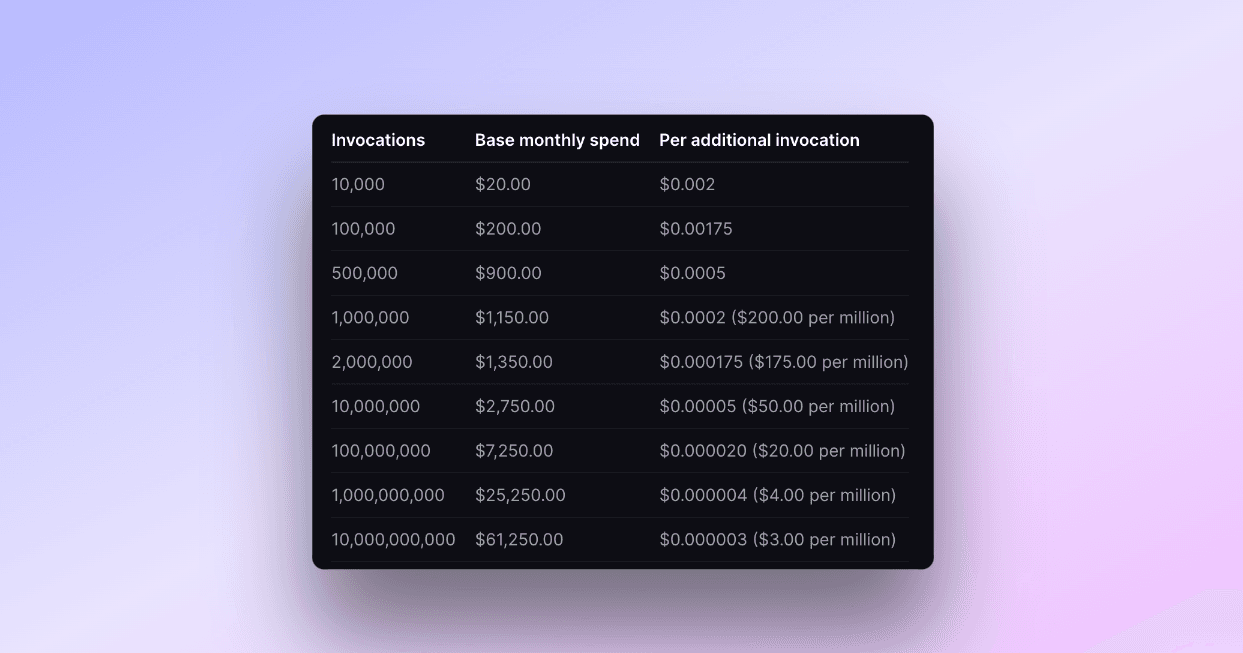

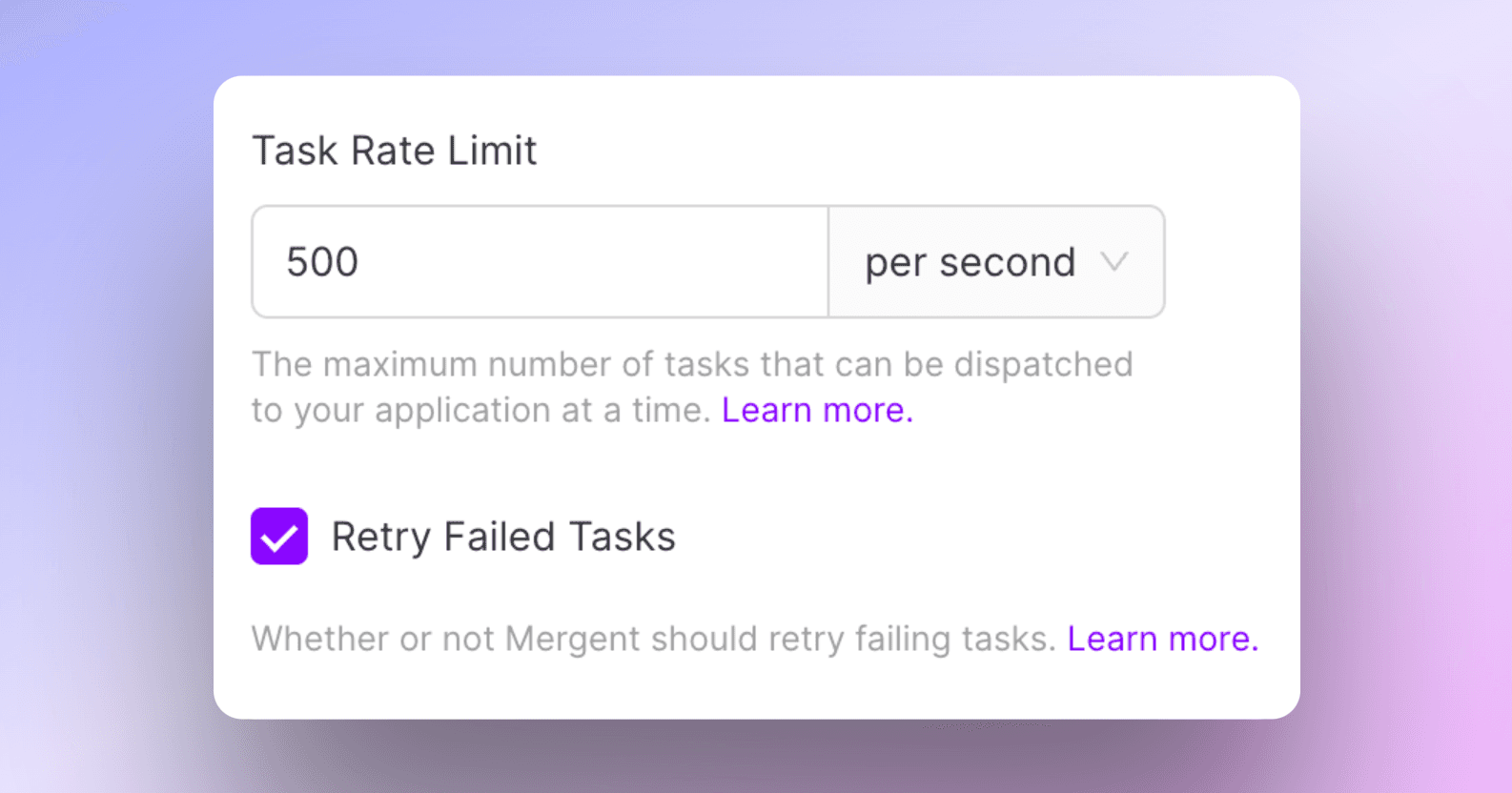

Mergent is a task queue API designed to operate reliably at high scale.

Naturally, we spend a lot of time trying to prevent downtime, so I want to share some terms and concepts that many of you may find useful.

Let’s dive in.

SLAs, SLOs, and SLIs

First, some common terminology:

SLAs (Service Level Agreements) are contractual commitments that set the performance expectations for your service. Missing them is not merely an operational failure; it's a breach of contract, which usually means it costs you money.

SLOs (Service Level Objectives) are the internal targets you set for your service, typically stricter than your SLAs, so that alerts fire before a situation turns dire.

SLIs (Service Level Indicators) are the actual metrics—latency, error rates, transaction volumes, etc.—that you should be constantly monitoring.
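
To make the relationship concrete, here is a minimal sketch that computes a request-based availability SLI, checks it against an SLO, and reports how much of the resulting error budget is left. The helper name, the 99.9% target, and the request counts are illustrative assumptions, not Mergent's actual numbers.

```python
from dataclasses import dataclass


@dataclass
class SloReport:
    sli: float               # measured availability: good requests / total requests
    slo: float               # internal target, e.g. 0.999
    budget_remaining: float  # fraction of the error budget left (negative = overspent)


def availability_report(good: int, total: int, slo: float) -> SloReport:
    """Compare a request-based availability SLI against an SLO (hypothetical helper)."""
    if total <= 0 or not 0.0 < slo < 1.0:
        raise ValueError("total must be positive and slo must be in (0, 1)")
    sli = good / total
    # The error budget is the number of failures the SLO tolerates in this window.
    allowed_failures = (1.0 - slo) * total
    actual_failures = total - good
    remaining = 1.0 - actual_failures / allowed_failures
    return SloReport(sli=sli, slo=slo, budget_remaining=remaining)


if __name__ == "__main__":
    # Hypothetical 30-day window: 1,000,000 requests, 400 of which failed.
    report = availability_report(good=999_600, total=1_000_000, slo=0.999)
    print(f"SLI={report.sli:.4%} SLO met={report.sli >= report.slo} "
          f"budget left={report.budget_remaining:.0%}")
```

With a 99.9% SLO, the window tolerates 1,000 failed requests, so 400 failures spend 40% of the budget and leave 60%.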

I won’t talk about these acronyms much more for the rest of this post, but you should definitely understand them. The Google SRE book (which I cannot recommend enough) has an entire chapter on this if you want to read more.

The Cost of Reliability

Aiming for "five nines" (99.999%) of uptime isn't just an engineering challenge; it comes with a big financial cost. More reliability means more engineering resources, and it will inflate your cloud bills significantly.

The key is to find the sweet spot between cost and reliability. It's not just about resource allocation; it's a risk assessment game. How far can you push the envelope without suffering significant business impact (i.e., before you breach your SLAs)?
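
One way to ground this trade-off is to translate an uptime target into the downtime it actually allows. The sketch below does that arithmetic for a 365-day year and a 30-day month; the helper name and the period lengths are assumptions chosen for illustration.

```python
from datetime import timedelta


def allowed_downtime(availability: float, period: timedelta) -> timedelta:
    """Maximum downtime permitted by an availability target over a period."""
    if not 0.0 < availability <= 1.0:
        raise ValueError("availability must be in (0, 1]")
    # timedelta * float is supported and rounds to the nearest microsecond.
    return period * (1.0 - availability)


YEAR = timedelta(days=365)
MONTH = timedelta(days=30)

if __name__ == "__main__":
    for target in (0.99, 0.999, 0.9999, 0.99999):
        per_year = allowed_downtime(target, YEAR)
        per_month = allowed_downtime(target, MONTH)
        print(f"{target * 100:g}% -> {per_year} per year, {per_month} per month")
```

Three nines leaves about 8.76 hours of downtime a year, while five nines leaves roughly 5 minutes and 15 seconds, which is why each extra nine gets disproportionately expensive.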

Architectural Choices & Scaling

Your decisions on system architecture lay the groundwork for how reliably your service will operate and scale.

Start by asking how each component scales: horizontally or vertically? Stateless APIs written in languages like Go or Node.js can usually scale horizontally with ease, while databases like PostgreSQL typically require vertical scaling(*).

Another layer of sophistication comes with auto-scaling and serverless, which help you absorb peak loads and scale down during lean times. If you can, I'd recommend enabling auto-scaling for your cloud services as a quick win.
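
Most autoscalers follow a target-tracking idea: compare a metric to its target and resize proportionally. The sketch below mirrors the replica formula documented for the Kubernetes Horizontal Pod Autoscaler, desiredReplicas = ceil(currentReplicas × currentMetric / targetMetric), clamped to a min/max range. The function name, the 10% tolerance, and the bounds are illustrative assumptions; real autoscalers also add stabilization windows and cooldowns.

```python
import math


def desired_replicas(current_replicas: int, current_metric: float, target_metric: float,
                     min_replicas: int = 1, max_replicas: int = 20,
                     tolerance: float = 0.1) -> int:
    """Target-tracking scaling decision (illustrative sketch, not a real autoscaler API)."""
    if current_replicas < 1 or target_metric <= 0:
        raise ValueError("need at least one replica and a positive target")
    ratio = current_metric / target_metric
    # Skip scaling when the metric is already close to target, to avoid flapping.
    if abs(ratio - 1.0) <= tolerance:
        return current_replicas
    proposed = math.ceil(current_replicas * ratio)
    # Clamp to configured bounds so a bad metric can't scale to zero or without limit.
    return max(min_replicas, min(max_replicas, proposed))


if __name__ == "__main__":
    # Hypothetical service: 4 replicas averaging 90% CPU against a 60% target.
    print(desired_replicas(4, current_metric=90, target_metric=60))  # -> 6
```

The clamp and the tolerance band are the parts that matter most for reliability: they keep a noisy or broken metric from thrashing your fleet.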

Monitoring and Alerting

No matter how robust your architecture is, or how well you've planned, without comprehensive monitoring you're navigating blind through SF-summer-level fog.

Which monitoring tools you choose (Grafana, Prometheus, Datadog, or something else) matters less than how well they work together to provide a coherent alerting pipeline.

Setting the right alert thresholds is an art; too lax and you miss incidents, too stringent and you’ll drown in false alarms at 3 AM (trust me on this).
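
One way to make thresholds less of a guessing game is to alert on error-budget burn rate instead of raw error counts, an approach described in Google's SRE Workbook. Burn rate is the observed error rate divided by the error rate the SLO allows; requiring both a long and a short window to exceed the threshold filters out brief blips while still catching sustained incidents. The 14.4 threshold with 1-hour and 5-minute windows follows the workbook's example for a 30-day SLO; the function names and inputs here are hypothetical.

```python
def burn_rate(error_rate: float, slo: float) -> float:
    """How fast the error budget is being consumed; 1.0 means exactly on budget."""
    if not 0.0 < slo < 1.0:
        raise ValueError("slo must be in (0, 1)")
    return error_rate / (1.0 - slo)


def should_page(error_rate_1h: float, error_rate_5m: float, slo: float,
                threshold: float = 14.4) -> bool:
    """Page only if both the long and the short window burn faster than the threshold.

    The long window shows the problem is significant; the short window shows it is
    still happening, so the alert clears quickly once the incident is fixed.
    """
    return (burn_rate(error_rate_1h, slo) > threshold
            and burn_rate(error_rate_5m, slo) > threshold)


if __name__ == "__main__":
    slo = 0.999  # tolerates a 0.1% error rate
    print(should_page(0.02, 0.03, slo))    # sustained incident -> True
    print(should_page(0.02, 0.0005, slo))  # already recovered -> False
```

A short spike that never moves the 1-hour rate won't page anyone, which is exactly the kind of 3 AM false alarm this pattern is meant to suppress.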

Preparing for the Inevitable Downtime

I hate to say it, but outages will happen. The key is to have a well-defined incident response playbook and effective communication channels.

Transparency during incidents fosters customer trust. And after resolving an incident, a detailed postmortem helps identify root causes and preventive measures, turning each outage into a learning opportunity.

PagerDuty and Atlassian have both published trimmed-down versions of their incident response playbooks, which are perfect sources of inspiration (okay, fine, copy/paste material for your team's Notion…) as you build your own.

Final Words

Remember that service reliability is not a destination; it's an ongoing process. The real goal is to build a culture where reliability is the entire team's business.

Good luck!